Final Submission

"Problem statement"

Our app is geared towards improving datasets in the radiology domain. We provide a GUI to visualise model inferences and modify the inference output, which helps both grow and improve the dataset. To summarise the ML domain in Andrej Karpathy's words, "Data is king", and we are making the data even better.

"Dataset"

The dataset we worked on is the NIH ChestX-ray14 chest X-ray dataset. It has about 112,000 images from over 30,000 distinct patients, but for object detection it provides annotation data for only about 900 images. That is far too few to train a model for medical use, so we decided to start with this dataset.

"Web App flow" [ Frontend ]

Here is the flow of the app:

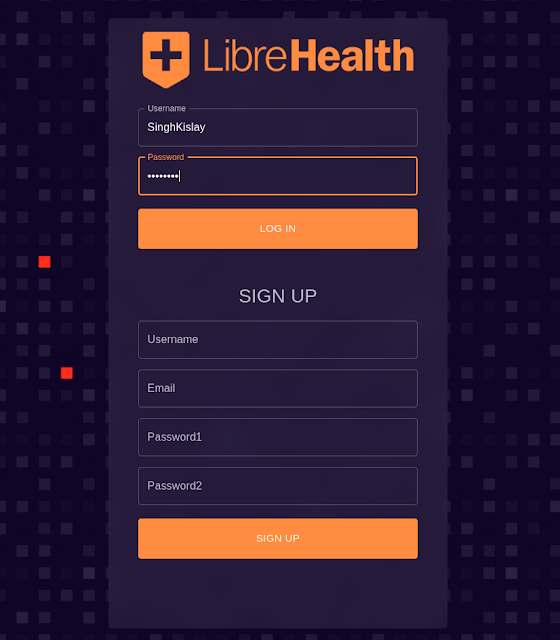

Sign Up / Sign In

Get Image and start annotations:

Things we can do:

- Select the deep learning model you want your annotations to come from

- Add more bounding boxes

- Remove bounding boxes

- Change the label of bounding boxes

- Add more labels to the label list

- Switch to segmentation

- Add segmentation label manually

- Hit save on the segmentation and bbox tools to save the labels to the backend

- At the end, download the bbox or segmentation data in CSV format to further train and improve the model
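To make the edit-and-export steps above concrete, here is a minimal sketch in Python of how a list of bounding boxes might be relabelled, pruned, and written out as CSV. It is only an illustration: the `BoundingBox` fields, the column names, and the helper functions `relabel_box`, `remove_box`, and `boxes_to_csv` are assumptions made for this example and are not taken from the app's actual backend or CSV schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, replace

# Hypothetical column layout; the app's real CSV schema may differ.
FIELDS = ["image_id", "label", "x", "y", "width", "height"]


@dataclass(frozen=True)
class BoundingBox:
    image_id: str   # file name of the X-ray image (assumed identifier)
    label: str      # finding name, e.g. "Cardiomegaly"
    x: float        # left edge in pixels
    y: float        # top edge in pixels
    width: float
    height: float


def relabel_box(boxes, index, new_label):
    """Return a new list where the box at `index` carries `new_label`."""
    updated = list(boxes)
    updated[index] = replace(updated[index], label=new_label)
    return updated


def remove_box(boxes, index):
    """Return a new list without the box at `index`."""
    return [box for i, box in enumerate(boxes) if i != index]


def boxes_to_csv(boxes):
    """Serialise the boxes to a CSV string with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for box in boxes:
        writer.writerow(asdict(box))
    return buffer.getvalue()


if __name__ == "__main__":
    # Pretend these two boxes came from a model's inference (made-up values).
    predicted = [
        BoundingBox("example.png", "Cardiomegaly", 10.0, 20.0, 100.0, 80.0),
        BoundingBox("example.png", "Nodule", 5.0, 5.0, 10.0, 10.0),
    ]
    corrected = relabel_box(predicted, 1, "Mass")  # user changes a label
    corrected = remove_box(corrected, 0)           # user deletes a wrong box
    print(boxes_to_csv(corrected))
```

Returning new lists rather than mutating in place keeps the model's original prediction available next to the corrected annotation, which could be useful when comparing the two later.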

Link to repository: lh-radiology-semi-automatic-image-annotator

Link to Commits

Link to Django API Docs

Link to LibreHealth Forum

"Architecture Diagram" [ Backend ]

"Work Status"

The initial goal for the app in my proposal was to build a GUI where a user can modify the object detection bounding boxes produced by inference from multiple models. As the summer progressed, we decided to add an image segmentation feature as well. So far, all of this is complete.

TO DO:

1) The segmentation tool can currently be used only for annotation, not for visualisation (unlike the bounding box tool).

2) Add more deep learning models to our app. (We plan to keep adding more and better models in the future.)

3) Add support for more datasets.

4) Finalise a hosting strategy.
